I used to think automating local SEO meant giving up control. It doesn’t. For local businesses, the real win is replacing the 3-hour weekly content scramble with a system that finds local search terms, writes the post, and publishes it before your competitors finish their keyword list. We build that kind of workflow for sites that need fresh pages every day, not every quarter.

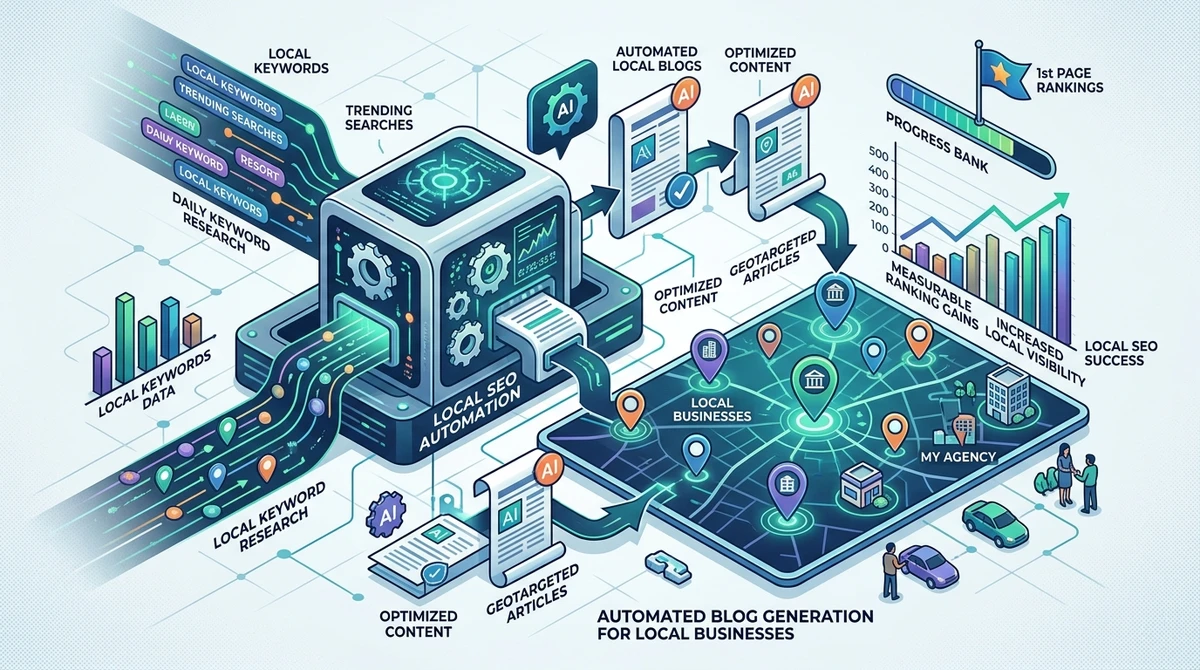

Automated local SEO refers to a repeatable system that discovers nearby search demand, creates optimized content, and publishes it on your domain without manual posting. For a plumber in Phoenix, that might mean one article about water heater repair in Ahwatukee today, then one about drain cleaning in Tempe tomorrow, each tied to real local intent.

This article shows what to automate, what not to automate, and how teams use daily content without turning the site into generic AI noise.

What should you automate first?

The first thing to automate is keyword discovery, because content built on guesswork wastes the next step. If you already know how to find local keywords, the rest of the system gets much easier: research, writing, publishing, and measurement can all run on the same cadence.

- Collect location-specific queries from Google Search Console, Google Business Profile, and your service pages.

- Group them by intent, such as emergency, comparison, neighborhood, or pricing searches.

- Feed those clusters into a publishing queue so each article targets one search need.

- Track impressions and clicks after 14 to 21 days, then refine the next batch.

Keyword → intent → article → publish → measure is the chain I use when a local site needs scale without chaos. For a roofing company, that can mean turning one keyword list into 30 posts in a month, each aimed at a real service area instead of a vague topic bucket.

That sequence matters because local rankings usually fail at the input stage, not the writing stage.
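The grouping step in that chain can be sketched in a few lines of Python. This is a minimal illustration, not a production classifier: the marker lists in `INTENT_RULES` and both function names are assumptions made for the example, and any query that matches no rule falls back to the neighborhood bucket.

```python
import re
from collections import defaultdict

# Hypothetical modifier lists for illustration; tune them to your own market.
INTENT_RULES = {
    "emergency": ["emergency", "urgent", "same day", "24 hour"],
    "pricing": ["cost", "price", "how much"],
    "comparison": ["best", "vs", "reviews"],
}


def classify_intent(query: str) -> str:
    """Return the first intent whose marker appears as a whole word or phrase."""
    text = query.lower()
    for intent, markers in INTENT_RULES.items():
        for marker in markers:
            if re.search(rf"\b{re.escape(marker)}\b", text):
                return intent
    # Assumption: anything unmatched is treated as a plain location query.
    return "neighborhood"


def group_by_intent(queries: list[str]) -> dict[str, list[str]]:
    """Bucket queries so each cluster can feed one slot in the publishing queue."""
    clusters = defaultdict(list)
    for query in queries:
        clusters[classify_intent(query)].append(query)
    return dict(clusters)


clusters = group_by_intent([
    "emergency plumber tempe",
    "water heater repair cost phoenix",
    "best drain cleaning mesa",
    "drain cleaning ahwatukee",
])
```

Each key in `clusters` then maps to one kind of article, which keeps a single post from trying to serve an emergency search and a pricing search at once.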

How does daily publishing change local rankings?

Daily publishing helps because local search is often freshness-sensitive, especially when competitors are adding service pages, blog posts, and location signals at the same time. I’m not saying one post a day magically lifts every page. I am saying consistency gives search engines more crawlable surface area, more topical clues, and more chances to match a query to your domain.

Daily SEO blog posts increase the odds that one of your pages lands on a long-tail query your team would never have manually prioritized. For example, a landscaping company in Austin might not write about “Buda native plant drainage” by hand, but an automated system can spot that query, publish it, and capture a customer who is ready to call.

According to Google’s guidance on helpful content, the point is not volume alone but usefulness. Daily posting works when each article answers a distinct local question better than the thin pages already ranking.

The contrast is simple: one careful post a month builds slowly, while 30 tightly matched posts can reveal which neighborhoods, services, and modifiers actually convert.

What is automated SEO content, really?

The best answer is blunt: automated SEO content is only useful when the automation is doing the repetitive work, not the thinking. In practice, that means the system handles research, draft creation, internal linking suggestions, and publishing, while the strategy still comes from the business rules you set upfront. That’s the difference between a useful engine and a content spammer.

The choice between automated and manual blog content is less a quality debate than a throughput debate. Manual writing gives you deep control, but it usually caps production at 2 to 4 solid posts a week if you’re also running a business. Automation pushes that limit higher, often to daily output, which matters when your competitors are publishing faster than your team can brief writers.

For example, a multi-location dental practice can automate posts for “teeth whitening in Scottsdale” and “emergency dentist in Mesa,” then hand the final approval to a manager only if they want a review step. The content stays aligned, but the bottleneck disappears.

Automation should remove friction, not judgment. If the system can’t separate a service query from a local informational query, it isn’t ready to publish.

Which parts should stay human?

The parts that affect trust should stay human, because local SEO lives and dies on specificity. I always keep the final review rule simple: if the article mentions a service area, a regulation, a price, or a time promise, a human should verify it before it goes live.

Write with automation, approve with context. That keeps the site fast without making it careless. A pool service company, for instance, can automate a post about weekly maintenance in Chandler, but a person should confirm whether the service area is accurate, the seasonal advice fits the market, and the CTA matches the actual offer.

Here’s the practical filter I use:

- Automate topic discovery when you need volume.

- Automate draft generation when the keyword set is clear.

- Keep human review for pricing, compliance, location claims, and brand tone.

- Use analytics review weekly, not monthly, if you’re publishing daily.

That split is what makes the system durable. Without it, you get speed for two weeks and cleanup for two months.

The teams that win here usually don’t write better first drafts; they build better guardrails.
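One way to turn the review rule into a guardrail is a small pre-publish check that flags drafts mentioning a service area, a regulation, a price, or a time promise. The sketch below is only an assumption about how such a gate might look: the regular expressions are deliberately narrow, and `review_reasons` is a name invented for this example.

```python
import re

# Illustrative patterns only; real copy will need broader, market-specific rules.
PRICE = re.compile(r"\$\s?\d")
TIME_PROMISE = re.compile(
    r"\b(same[- ]day|within \d+ (?:minutes|hours|days))\b", re.IGNORECASE
)
REGULATION = re.compile(
    r"\b(permits?|licensed?|regulations?|ordinances?)\b", re.IGNORECASE
)


def review_reasons(text: str, service_areas: list[str]) -> list[str]:
    """List why a draft needs a human check; an empty list means no trigger fired."""
    reasons = []
    lowered = text.lower()
    if any(area.lower() in lowered for area in service_areas):
        reasons.append("service area")
    if REGULATION.search(text):
        reasons.append("regulation")
    if PRICE.search(text):
        reasons.append("price")
    if TIME_PROMISE.search(text):
        reasons.append("time promise")
    return reasons


draft = "We offer same-day pool cleaning in Chandler from $89."
reasons = review_reasons(draft, ["Chandler", "Gilbert"])
needs_human = bool(reasons)  # hold the post for approval when anything fired
```

A draft with no triggers can go straight to the queue, while anything flagged waits for a person who knows the market.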

How do you measure whether it’s working?

You measure it by comparing crawl growth, impressions, and leads over a 30 to 90 day window, not by staring at one keyword. The clearest signal is usually a steady rise in indexed pages plus a widening set of queries in Google Search Console. If the site gains 40 new relevant impressions a day after week three, that matters more than one vanity ranking spike.

SEO Growth = Intent Match × Publishing Consistency is the formula I use because the two factors multiply: a weak score on either side drags down the whole result. If the content is relevant but irregular, Google gets little to work with. If it’s frequent but generic, the pages get indexed and ignored.
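To make the multiplication concrete, here is a rough sketch that treats both factors as values between 0 and 1. The scale, the function names, and the sample numbers are all assumptions for illustration; the formula itself is a rule of thumb, not a metric any search engine reports.

```python
def publishing_consistency(days_published: int, days_in_window: int) -> float:
    """Share of days in the window that received a new post (0.0 to 1.0)."""
    if days_in_window <= 0:
        raise ValueError("days_in_window must be positive")
    return min(days_published, days_in_window) / days_in_window


def growth_score(intent_match: float, consistency: float) -> float:
    """Multiply the two factors so a weak one limits the result."""
    for name, value in (("intent_match", intent_match), ("consistency", consistency)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    return intent_match * consistency


# Hypothetical sites: the intent_match values are hand-assigned guesses.
relevant_but_irregular = growth_score(0.9, publishing_consistency(6, 30))
frequent_but_generic = growth_score(0.2, publishing_consistency(30, 30))
balanced = growth_score(0.8, publishing_consistency(27, 30))
```

With these sample inputs the balanced site scores well above either lopsided one, which is the behavior the formula is meant to capture.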

According to Pew Research Center’s internet research, people still rely heavily on search for practical local decisions, which is why service pages and locally tuned articles can affect real lead flow, not just traffic.

Example: a pest control company published one daily article for 45 days, then saw branded queries rise and calls shift from one service page to three neighborhood pages. That kind of spread is the point, because local demand rarely sits in a single keyword.

What usually breaks automated local SEO?

The most common failure is over-automation without local proof. The system keeps publishing, but the articles read like they could belong to any city, any trade, any customer. That usually happens when teams skip the local keyword layer and jump straight to drafting.

Local relevance beats content volume every time. A page about “best HVAC tips” will not perform like a page about “how to prep your Scottsdale AC for July heat” because the second phrase maps to a real situation, season, and market.

- No service-area specificity in the title or first paragraph.

- No neighborhood, city, or use-case variation in the keyword set.

- No internal linking to money pages.

- No review cycle for regulated or seasonal claims.

One concrete failure I see often: a franchise publishes 90 AI posts, but all 90 target broad terms. The site gets more pages, not more qualified leads. The fix is smaller and sharper, not bigger.

That’s why the local layer has to be first-class input, not an afterthought added during editing.
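Some of the failure checks in that list are mechanical enough to run before a post goes live. The following sketch assumes a post is available as plain strings and that money pages can be recognized by URL path; `audit_post`, `areas_covered`, and the sample paths are hypothetical names made up for this example.

```python
def audit_post(title: str, first_paragraph: str, body: str,
               service_areas: list[str], money_pages: list[str]) -> list[str]:
    """Return the local-proof problems found in one post."""
    issues = []
    opening = f"{title} {first_paragraph}".lower()
    if not any(area.lower() in opening for area in service_areas):
        issues.append("no service area in title or first paragraph")
    # Assumption: an internal link shows up as the money page's path in the body.
    if not any(path in body for path in money_pages):
        issues.append("no internal link to a money page")
    return issues


def areas_covered(keywords: list[str], service_areas: list[str]) -> set[str]:
    """Which service areas appear anywhere in the keyword set."""
    return {
        area for area in service_areas
        if any(area.lower() in keyword.lower() for keyword in keywords)
    }


areas = ["Scottsdale", "Mesa"]
generic = audit_post(
    "Best HVAC Tips", "Keep your system running well.", "Call us today.",
    areas, ["/ac-repair/"],
)
local = audit_post(
    "How to Prep Your Scottsdale AC for July Heat",
    "July puts real strain on cooling systems.",
    'See our <a href="/ac-repair/">AC repair</a> page.',
    areas, ["/ac-repair/"],
)
coverage = areas_covered(["best hvac tips", "ac tune-up scottsdale"], areas)
```

The review cycle for regulated or seasonal claims is left out on purpose, since that check needs a person rather than a string match.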

How RankOrg fits into a daily content system

If you want the short answer, we built RankOrg to do the repetitive work end to end: discover local keywords, generate SEO blog posts, and publish them on the client’s domain every day. That matters for local businesses and agencies that don’t want to manage CMS setup, writer coordination, or posting calendars.

One article a day is enough when the system keeps feeding the site queries your customers actually type. For a niche contractor, that can mean a stream of localized posts around neighborhoods, services, and seasonal problems instead of one-off articles that never connect to revenue.

- We identify local search terms that match buyer intent.

- We generate the article in SEO format.

- We publish it automatically on the site.

- We keep the cadence going so the domain stays fresh.

That’s the real shift: not “more content,” but a machine that keeps your site active while you stay focused on sales, operations, or client delivery.

When the content engine finally runs without weekly babysitting, you stop asking whether you can post more and start asking which local searches you want to own next.

How do you keep the system credible over time?

Use a simple rule: every article must answer a searcher’s local question in one pass. If it needs three paragraphs to say what the customer wanted in one sentence, the topic is probably too broad or too thin. The strongest automated systems I’ve seen behave like a disciplined newsroom, not a content factory.

Content formula: local intent + real scenario + clear action. For example, “late-night drain backup in Tempe” is stronger than “drain cleaning tips” because the reader, the place, and the urgency are all visible at once.

That’s also why I like using a weekly checkpoint:

- Review top-impression posts.

- Check whether the local modifier is actually present.

- Confirm the CTA matches the service page.

- Archive topics that attract clicks but no leads.

If you keep those checkpoints tight, daily publishing stays useful instead of noisy.
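Two of those checkpoint steps, reviewing top-impression posts and archiving topics that attract clicks but no leads, reduce to a sort and a filter. The sketch below assumes you can export per-post impressions, clicks, and leads into one record; the `PostStats` fields, the `min_clicks` threshold, and the sample numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class PostStats:
    topic: str
    impressions: int
    clicks: int
    leads: int


def weekly_checkpoint(posts: list[PostStats], top_n: int = 3, min_clicks: int = 20):
    """Return (posts to review first, topics to consider archiving)."""
    to_review = sorted(posts, key=lambda p: p.impressions, reverse=True)[:top_n]
    # Clicks without leads suggest the topic draws readers, not customers.
    to_archive = [p.topic for p in posts if p.clicks >= min_clicks and p.leads == 0]
    return to_review, to_archive


# Made-up numbers, only to show the shape of the output.
posts = [
    PostStats("late-night drain backup in Tempe", 900, 40, 5),
    PostStats("drain cleaning tips", 1200, 35, 0),
    PostStats("water heater repair in Ahwatukee", 300, 8, 1),
]
to_review, to_archive = weekly_checkpoint(posts, top_n=2)
```

The local-modifier and CTA checks still need a human read of the flagged posts; the code only decides which posts deserve that attention first.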

That’s the point we kept running into while building RankOrg, and it’s still the line I’d hold for any local site that wants volume without losing trust.

What should you automate first?

Start with keyword discovery and publishing cadence. Those two pieces create the fastest path to useful volume because they decide what gets written and how often it reaches the site. If you automate writing before you automate topic selection, you get speed aimed at the wrong query set, which is how most local content systems waste their first month.

A practical setup for a small service business is simple: one location list, one service list, one daily article queue, and one weekly review of Search Console. That’s enough to uncover patterns like which neighborhoods search after business hours, which services spike by season, and which terms lead to form fills instead of just traffic.

In my experience, the site starts showing clearer query clusters after about 3 weeks, then the lead-quality signal becomes easier to read around day 30. The system doesn’t need to be fancy. It needs to be accurate, frequent, and tied to the search phrases your customers already use.
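The small-business setup described above (one location list, one service list, one daily queue) can be sketched as a cross product with a publish date attached to each pairing. This is a simplified assumption about how a queue might be seeded; `build_queue` is a hypothetical helper, and a real queue would be filtered by actual search demand before anything is written.

```python
from datetime import date, timedelta
from itertools import product


def build_queue(services: list[str], locations: list[str], start: date):
    """Pair every service with every location and assign one topic per day."""
    pairs = product(services, locations)
    return [
        (start + timedelta(days=offset), f"{service} in {location}")
        for offset, (service, location) in enumerate(pairs)
    ]


queue = build_queue(
    ["drain cleaning", "water heater repair"],
    ["Tempe", "Ahwatukee"],
    date(2024, 1, 1),
)
for publish_date, topic in queue:
    print(publish_date.isoformat(), topic)
```

Two services and two locations already fill four days, so even short lists produce a month of topics quickly.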

How do you know if daily posts are helping?

Look for three signals at once: indexed pages, query variety, and lead quality. A lot of teams watch rankings too early and miss the real pattern. I’d rather see a site move from 12 ranking queries to 48 ranking queries in 60 days than watch one keyword bounce between positions 8 and 14. That wider footprint usually means the site is matching more local intents, which is what eventually feeds service-page traffic.

The easiest example is a home services company that publishes daily posts about specific neighborhoods, seasonal fixes, and urgent problems. After 30 to 45 days, the business often sees more branded searches, more page-specific impressions, and a better spread of calls across the site, not just the homepage.

If you want one rule of thumb, use this: if content is driving impressions but no calls after 6 to 8 weeks, the topics are probably too broad or the CTA is too weak. The measurement has to reflect business outcomes, not just visibility.
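Reading the three signals together can be as simple as comparing two snapshots. The sketch below is an assumption about how to record them: `Snapshot` and its fields are hypothetical, qualified lead count stands in for lead quality, and only the 12 to 48 query figures come from the example above.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    indexed_pages: int
    ranking_queries: int
    qualified_leads: int  # rough proxy for lead quality


def signal_changes(before: Snapshot, after: Snapshot) -> dict[str, int]:
    """Difference in each signal between two points in time."""
    return {
        "indexed_pages": after.indexed_pages - before.indexed_pages,
        "ranking_queries": after.ranking_queries - before.ranking_queries,
        "qualified_leads": after.qualified_leads - before.qualified_leads,
    }


def is_helping(before: Snapshot, after: Snapshot) -> bool:
    """True only when all three signals rose, not just visibility."""
    return all(delta > 0 for delta in signal_changes(before, after).values())


day_0 = Snapshot(indexed_pages=20, ranking_queries=12, qualified_leads=4)
day_60 = Snapshot(indexed_pages=80, ranking_queries=48, qualified_leads=9)
visibility_only = Snapshot(indexed_pages=80, ranking_queries=48, qualified_leads=4)
```

Requiring all three to rise is a strict reading of the rule; a site where pages and queries grow while leads stay flat is the case the 6 to 8 week check is meant to catch.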

FAQ

How long does it take to automate local SEO?

Most teams can set up the workflow in days, but the search signal usually needs 3 to 6 weeks to show a reliable pattern. That window gives Google time to crawl the new pages, connect them to local intent, and surface early impressions. If the site already has some authority, the first changes can show sooner. If the domain is new, I’d expect a slower read. The important part is consistency, because a daily publishing cadence creates a much cleaner test than random bursts of content.

Is automated blog content better than manual writing?

It’s better when the goal is consistent local coverage at scale, and manual writing is better when a single page needs deep subject nuance. I use automation for the repeatable layers (keyword discovery, draft creation, and publishing), then keep humans on the pieces that require judgment. For local SEO, that split usually wins because search demand changes too fast for fully manual schedules to keep up. A small agency managing 15 clients can feel the difference within a month.

Can automated SEO content hurt a local site?

Yes, if the system publishes generic pages with no local intent or quality control. The risk isn’t automation itself; it’s bad inputs and no review. If every post sounds like it could belong to any city, the site loses trust fast. I’d rather publish fewer, sharper articles tied to real service areas than flood the domain with thin copy. That’s the difference between a content engine and a cleanup project.